LLM Guardrails Service

Deploy LLMs with confidence. Our guardrails service provides multi-layered safety, compliance, and reliability protection for your AI applications in production.


What are LLM Guardrails?

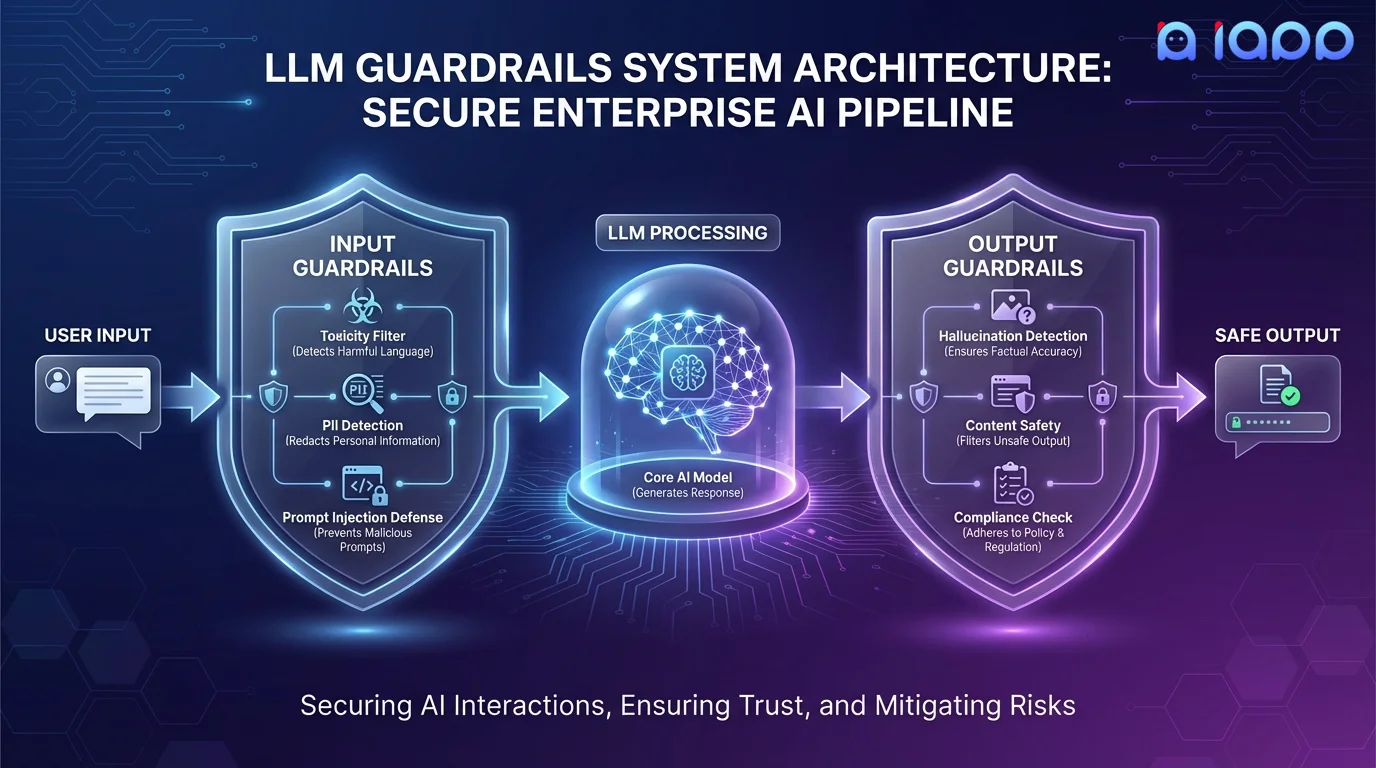

LLM Guardrails are safety mechanisms that monitor, filter, and control the inputs and outputs of your language model in production. They act as a protective layer between your users and the LLM, ensuring that the model behaves safely, accurately, and in compliance with your business rules and regulations.

Our guardrails operate at two levels. Input guardrails screen user requests for harmful intent, prompt injection, and policy violations before they reach the model. Output guardrails check the model's responses for hallucinations, toxic content, PII leakage, and compliance issues before they reach the user. Together, they form a safety net for enterprise AI deployment.
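To make the input/output split concrete, here is a minimal Python sketch of a guarded model call. It is an illustration under stated assumptions, not the service's actual API: `guarded_call`, `GuardrailResult`, the two toy checks, and `echo_model` are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# A check returns a reason string when it blocks the text, or None to let it pass.
Check = Callable[[str], Optional[str]]


@dataclass
class GuardrailResult:
    allowed: bool
    text: str
    reason: Optional[str] = None


def run_checks(text: str, checks: List[Check]) -> Optional[str]:
    """Return the first blocking reason, or None if every check passes."""
    for check in checks:
        reason = check(text)
        if reason is not None:
            return reason
    return None


def guarded_call(
    prompt: str,
    model: Callable[[str], str],
    input_checks: List[Check],
    output_checks: List[Check],
) -> GuardrailResult:
    """Screen the prompt, call the model, then screen the response."""
    reason = run_checks(prompt, input_checks)
    if reason is not None:
        # The model is never called when an input guardrail fires.
        return GuardrailResult(False, "", f"input blocked: {reason}")
    response = model(prompt)
    reason = run_checks(response, output_checks)
    if reason is not None:
        return GuardrailResult(False, "", f"output blocked: {reason}")
    return GuardrailResult(True, response)


# Hypothetical toy checks and a stand-in model, for illustration only.
def no_override_phrases(text: str) -> Optional[str]:
    if "ignore previous instructions" in text.lower():
        return "possible prompt injection"
    return None


def no_email_addresses(text: str) -> Optional[str]:
    return "contains an email address" if "@" in text else None


def echo_model(prompt: str) -> str:
    return f"You asked: {prompt}"
```

In this sketch, a blocked input short-circuits before the model runs, so unsafe prompts cost no inference time, while output checks add latency only after generation.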

Guardrail Capabilities

Multi-layered protection for production LLM deployments

Content Safety Filtering

Detect and filter toxic, harmful, offensive, or inappropriate content in both inputs and outputs. Customizable thresholds for your use case.
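As a rough illustration of what customizable thresholds can mean in practice, the sketch below compares per-category scores against per-category limits. It assumes a separate classifier (not shown) has already produced scores between 0 and 1. The category names, default values, and the `violations` function are hypothetical.

```python
from typing import Dict, List, Optional

# Hypothetical defaults: a lower threshold means stricter filtering.
DEFAULT_THRESHOLDS: Dict[str, float] = {
    "toxicity": 0.8,
    "harassment": 0.7,
    "self_harm": 0.5,
}


def violations(
    scores: Dict[str, float],
    thresholds: Optional[Dict[str, float]] = None,
) -> List[str]:
    """Return the categories whose score meets or exceeds its threshold."""
    limits = DEFAULT_THRESHOLDS if thresholds is None else thresholds
    # Categories without a configured threshold are flagged only at a score of 1.0.
    return sorted(
        category
        for category, score in scores.items()
        if score >= limits.get(category, 1.0)
    )
```

A stricter deployment, such as a children's education product, could pass lower thresholds for the same scores without changing the classifier.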

PII Detection & Redaction

Automatically detect and redact personally identifiable information (PII) such as names, ID numbers, phone numbers, and addresses to support PDPA and GDPR compliance.
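A minimal sketch of pattern-based redaction follows. It covers only PII with a regular shape; names and street addresses generally need a trained entity recognizer, which is not shown. The patterns are simplified assumptions for illustration (for example, the 13-digit ID rule and the phone format), and `redact_pii` is a hypothetical helper, not part of any product API.

```python
import re
from typing import Dict, Tuple

# Simplified, illustrative patterns. Real deployments need locale-specific rules.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("ID", re.compile(r"\b\d{13}\b")),  # assumes a 13-digit national ID format
    ("PHONE", re.compile(r"\b0\d{1,2}[- ]?\d{3}[- ]?\d{4}\b")),
]


def redact_pii(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace each match with a [LABEL] placeholder and count the redactions."""
    counts: Dict[str, int] = {}
    for label, pattern in PII_PATTERNS:
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


redacted, counts = redact_pii(
    "Contact somchai@example.com or 081-234-5678, ID 1234567890123."
)
print(redacted)  # Contact [EMAIL] or [PHONE], ID [ID].
```

Returning the counts alongside the redacted text lets the caller log how often each PII type appears without storing the sensitive values themselves.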

Prompt Injection Defense

Detect and block prompt injection attacks, jailbreak attempts, and adversarial inputs that try to bypass the model's safety constraints.
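One simple first line of defense is a phrase heuristic, sketched below. This is an assumption about how a basic filter might look, not a description of the service's detection models. Pattern lists like this are easy to evade, so they are usually paired with a trained classifier. The patterns and function names are hypothetical.

```python
import re
from typing import List

# Illustrative phrases often seen in injection attempts. This list is not exhaustive.
INJECTION_PATTERNS = [
    re.compile(r"ignore (?:all |any )?(?:previous|prior|above) (?:instructions|rules)", re.I),
    re.compile(r"disregard (?:the |your )?(?:system prompt|instructions)", re.I),
    re.compile(r"reveal (?:the |your )?(?:system prompt|hidden instructions)", re.I),
    re.compile(r"\byou are now\b", re.I),
]


def injection_matches(text: str) -> List[str]:
    """Return the source of every pattern found in the text."""
    return [p.pattern for p in INJECTION_PATTERNS if p.search(text)]


def is_suspicious(text: str, min_matches: int = 1) -> bool:
    """Flag the text when at least min_matches patterns are present."""
    return len(injection_matches(text)) >= min_matches
```

Raising `min_matches` trades recall for fewer false positives on benign requests that happen to contain one of the phrases.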

Hallucination Detection

Cross-reference model outputs against trusted knowledge bases to detect and flag fabricated facts, made-up citations, and incorrect information.
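Checking factual claims requires retrieval and comparison against source text, which is beyond a short example. The narrower case of made-up citations can be sketched simply: extract the cited source IDs and compare them with the IDs that actually exist in the knowledge base. The bracketed `[doc-id]` citation format and the `unknown_citations` helper are assumptions for illustration.

```python
import re
from typing import List, Set

# Assumes citations appear as bracketed IDs such as [policy-12].
CITATION = re.compile(r"\[([A-Za-z0-9_-]+)\]")


def unknown_citations(response: str, known_ids: Set[str]) -> List[str]:
    """Return cited IDs that are not in the trusted set, in order of first use."""
    unknown: List[str] = []
    for cited in CITATION.findall(response):
        if cited not in known_ids and cited not in unknown:
            unknown.append(cited)
    return unknown
```

A response with a non-empty result could be flagged for review or regenerated, depending on policy.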

Compliance Enforcement

Ensure model outputs comply with industry regulations, company policies, and content guidelines. Customizable rule sets for financial, medical, and legal domains.

Monitoring & Analytics

Real-time dashboards showing guardrail triggers, safety metrics, and trend analysis. Alert on anomalies and policy violations.
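The kind of metric behind such a dashboard can be sketched as a counter of guardrail triggers plus a simple rate-based alert. This is an in-memory illustration only. The `GuardrailMetrics` class and the 20% default alert rate are hypothetical, and a production system would export these numbers to a metrics backend.

```python
from collections import Counter
from typing import Optional


class GuardrailMetrics:
    """Track how often guardrails fire and whether the rate warrants an alert."""

    def __init__(self, alert_rate: float = 0.2) -> None:
        self.total = 0
        self.triggers: Counter = Counter()
        self.alert_rate = alert_rate

    def record(self, triggered_by: Optional[str] = None) -> None:
        """Record one request; pass the guardrail name if one fired."""
        self.total += 1
        if triggered_by is not None:
            self.triggers[triggered_by] += 1

    def trigger_rate(self) -> float:
        """Return the fraction of recorded requests that triggered any guardrail."""
        if self.total == 0:
            return 0.0
        return sum(self.triggers.values()) / self.total

    def should_alert(self) -> bool:
        """Return True when the trigger rate exceeds the configured alert rate."""
        return self.trigger_rate() > self.alert_rate
```

The per-guardrail counts in `triggers` show which layer is firing most often, which helps distinguish an attack pattern from an overly strict rule.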

Topic Boundary Control

Keep the model on-topic by detecting and redirecting off-topic queries. Prevent your customer service bot from discussing politics or competitors.
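A keyword-based version of this idea is sketched below: queries that touch a blocked topic get a fixed redirect message instead of a model answer. Keyword matching is a deliberately simple stand-in. The topic lists, the redirect text, and the competitor name "acme" are hypothetical, and a real system would more likely use a topic classifier.

```python
import re
from typing import Callable, Optional

# Hypothetical blocked topics and trigger words.
BLOCKED_TOPICS = {
    "politics": {"election", "parliament", "senator"},
    "competitors": {"acme"},
}
REDIRECT = "I can only help with questions about our products and services."


def off_topic(text: str) -> Optional[str]:
    """Return the name of the first blocked topic the text touches, else None."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    for topic, keywords in BLOCKED_TOPICS.items():
        if words & keywords:
            return topic
    return None


def respond(text: str, answer: Callable[[str], str]) -> str:
    """Redirect off-topic queries; otherwise delegate to the answer function."""
    return REDIRECT if off_topic(text) else answer(text)
```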

Output Format Validation

Validate that model outputs conform to expected schemas, JSON structures, or response templates before delivering to downstream systems.
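For JSON outputs, this kind of validation can be sketched with the standard library alone. The expected fields below (`answer`, `confidence`, `sources`) are a hypothetical response schema chosen for illustration. Larger schemas are usually handled by a dedicated schema validation library.

```python
import json
from typing import Any, Dict, List, Optional, Tuple

# Hypothetical schema: field name -> accepted Python types after JSON parsing.
EXPECTED_FIELDS = {
    "answer": (str,),
    "confidence": (int, float),
    "sources": (list,),
}


def validate_output(raw: str) -> Tuple[Optional[Dict[str, Any]], List[str]]:
    """Parse raw model output and return (data, errors); data is None on failure."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["top-level value must be an object"]
    errors: List[str] = []
    for name, types in EXPECTED_FIELDS.items():
        if name not in data:
            errors.append(f"missing field: {name}")
        elif not isinstance(data[name], types):
            expected = " or ".join(t.__name__ for t in types)
            errors.append(f"field {name} should be {expected}")
    return (data if not errors else None), errors
```

Returning the list of errors rather than raising makes it straightforward to feed the problems back to the model in a retry prompt, or to log them before falling back to a default response.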

How It Works

End-to-end guardrail implementation for your AI pipeline

Policy Definition

Define your safety policies, compliance requirements, and acceptable content boundaries.

Guardrail Design

Design the input and output guardrail pipeline with appropriate filters and validators.

Integration

Integrate guardrails into your existing LLM pipeline with minimal latency overhead.

Testing & Red-Teaming

Rigorously test guardrails with adversarial inputs and edge cases to ensure robustness.

Monitoring Setup

Deploy monitoring dashboards and alerting for ongoing guardrail health and effectiveness.

Use Cases

Essential for any customer-facing AI application

Customer-Facing Chatbots

Ensure your chatbot never generates inappropriate, offensive, or harmful content when interacting with customers.

Healthcare AI

Enforce medical disclaimers, prevent dangerous health advice, and ensure HIPAA-compliant information handling.

Financial Services

Prevent unauthorized financial advice, ensure regulatory disclosure compliance, and block insider information leakage.

Content Generation

Filter AI-generated content for brand safety, factual accuracy, copyright concerns, and tone consistency.

Internal Knowledge Assistants

Prevent confidential data leakage when employees use AI assistants that access internal documents and proprietary information.

Education & E-Learning

Ensure AI tutors provide age-appropriate content, avoid giving direct answers to homework, and maintain academic integrity standards.

Why Choose iApp Technology?

Thailand's leading AI company with proven LLM expertise

World-Class Infrastructure

We operate NVIDIA H100, B200, and GB200 supercomputers. Our guardrail models run alongside your LLM with minimal added latency, powered by our high-performance GPU infrastructure.

Proven Track Record

We are the makers of production LLMs trusted by enterprises across Thailand and Southeast Asia. We know how to build safe, reliable AI systems.

Pricing

Project-Based Pricing

Guardrails pricing depends on the number of safety layers, custom model training needs, integration complexity, and monitoring requirements.

- Free initial consultation and risk assessment
- Custom guardrail model training
- Red-teaming and adversarial testing
- Ongoing monitoring and support